Postgres Distributed: A Native Path Forward for OpenAI’s Database Layer

OpenAI’s PostgreSQL Scaling Tradeoffs

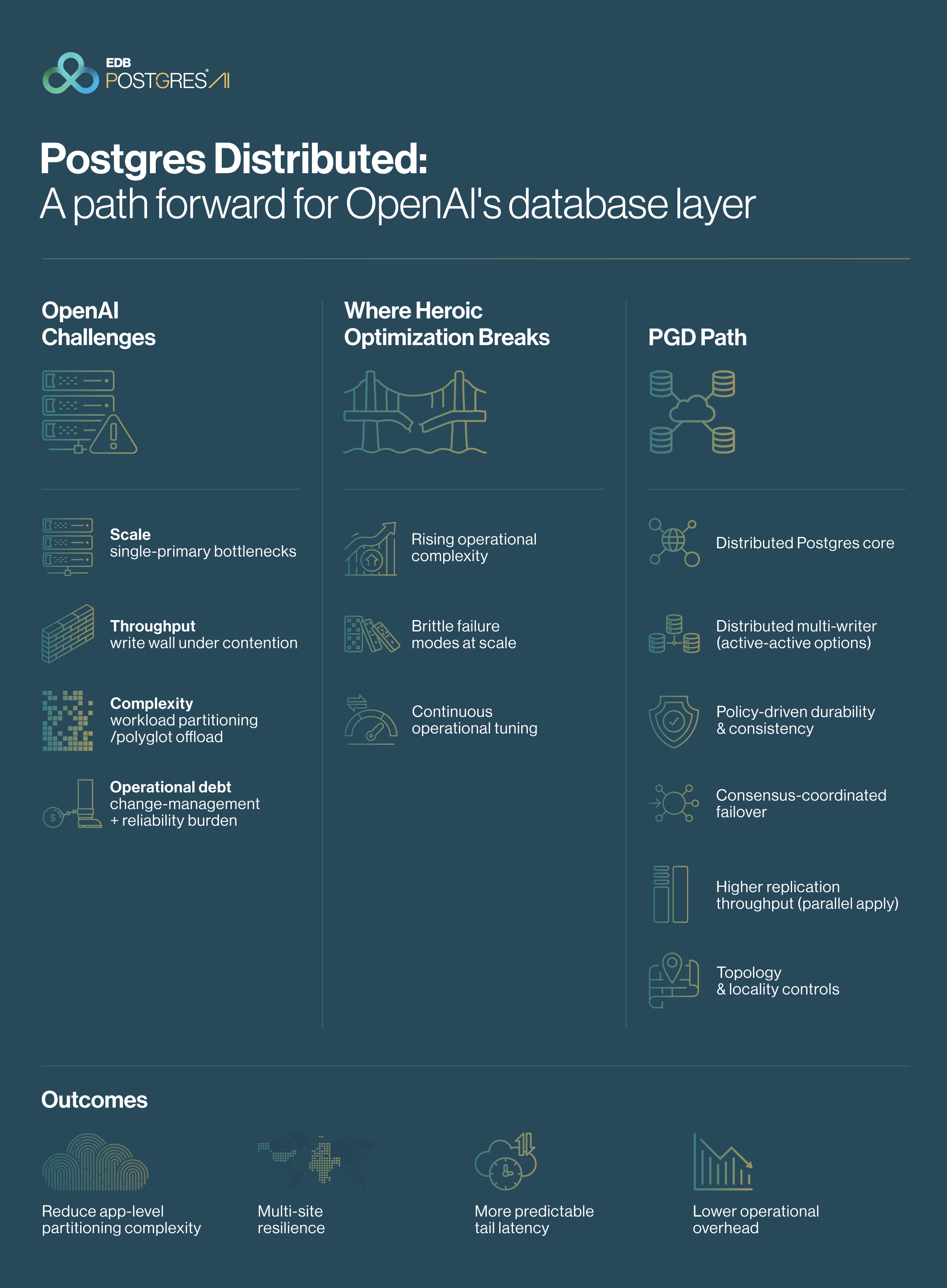

We have analyzed OpenAI’s current database architecture, which supports more than 800 million users, and the scaling trade-offs their engineering team has publicly shared as they stretch PostgreSQL to meet sustained global demand. In "Why OpenAI Should Use Postgres Distributed," EDB’s Jozef de Vries has outlined why Postgres Distributed is the pragmatic next phase for this kind of trajectory. This article summarizes that case and introduces how EDB Postgres Distributed (PGD) (also referred to as Distributed HA; historically BDR) supports multi-site resiliency and predictable operations without abandoning PostgreSQL.

To recap, OpenAI has achieved a "management miracle" by scaling a Single Primary architecture on Azure Flexible Server through extreme operational discipline: specifically, "strict SQL reviews," "5-second schema timeouts," and a "polyglot" approach that offloads write-heavy data to Azure Cosmos DB.

This operational complexity stems from the architectural limits of Physical Streaming Replication (PSR), which restricts flexible scale-out and introduces fragility during failovers. PSR fundamentally relies on exact block-level consistency, making it impossible to control replication lag dynamically or execute DDL changes without disruptive "big bang" restarts. This forces your team to rely on complex "application-level sharding" and sophisticated monitoring to prevent SEV-0 outages, suggesting the current relational system is operating at its physical ceiling.

EDB proposes a transition from this fragile equilibrium to a Distributed PostgreSQL architecture designed for high availability (HA), performance, and resilience at a global scale. By decoupling data movement from the physical storage layer and adopting a native Active-Active architecture, OpenAI can reduce application-level workload partitioning/polyglot offload complexity and simplify operational overhead for failover and maintenance, addressing the underlying drivers of replication lag sensitivity and schema-change rigidity with an automated, self-healing distributed system.

What is Postgres Distributed (PGD) (also referred to as Distributed HA; historically BDR)?

EDB Postgres Distributed (PGD) is a fully integrated stack that transforms standard PostgreSQL into a distributed, always-on database platform (see PGD documentation). It enables business continuity through Active-Active replication and simplifies distributed data durability and consistency using predefined durability profiles (Commit Scopes) that replace complex configurations. Designed for the modern enterprise, PGD supports granular data locality strategies, automated conflict management, and seamless lifecycle management, while keeping applications online across all active nodes and regions.

We recognize that moving to a distributed architecture requires logical replication, which traditionally brings two penalties: severe CPU overhead, because the WAL is decoded separately for every subscriber, and the operational complexity of a distributed system. However, PGD is not standard logical replication. It provides a re-architected distributed engine that avoids the decoding penalty by performing logical decoding only once on the source node and sharing the resulting Logical Change Records (LCRs) across all streams. It also manages scale and complexity natively, using Parallel Apply for up to 5x faster throughput and embedded Raft consensus for deterministic routing and conflict resolution.
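The difference between per-subscriber decoding and decode-once can be put in rough numbers. The sketch below is a back-of-the-envelope cost model, not a measurement of PGD or PostgreSQL: the function names and the `cpu_seconds_per_gb` figure are hypothetical placeholders chosen only to show how the cost scales with the number of subscribers.

```python
def decode_passes(subscribers: int, shared_decoding: bool) -> int:
    """Number of logical-decoding passes over the WAL on the source node."""
    if subscribers < 0:
        raise ValueError("subscribers must be non-negative")
    if subscribers == 0:
        return 0
    # Per-subscriber decoding repeats the work once per stream;
    # shared decoding does it once and reuses the decoded records.
    return 1 if shared_decoding else subscribers


def decode_cpu_seconds(wal_gb: float, subscribers: int, shared_decoding: bool,
                       cpu_seconds_per_gb: float = 10.0) -> float:
    """Estimated decoding CPU time; cpu_seconds_per_gb is an assumed placeholder."""
    return wal_gb * cpu_seconds_per_gb * decode_passes(subscribers, shared_decoding)


# Illustration with 2 GB of WAL and 50 subscribers (both numbers are made up).
per_subscriber = decode_cpu_seconds(2.0, 50, shared_decoding=False)
decode_once = decode_cpu_seconds(2.0, 50, shared_decoding=True)
print(per_subscriber, decode_once)
```

Under this model the per-subscriber cost grows linearly with the fleet size, while the decode-once cost stays flat regardless of how many streams consume the decoded records.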

We have mapped OpenAI’s published pain points into six specific categories where PGD provides native resolution.

I. Traffic Amplification & Load Spikes

Current Challenge: When a single primary node reaches capacity, response times increase, triggering application-level retries that multiply incoming request volume. Even with PgBouncer deployed to pool connections, sudden traffic spikes and idle transactions can still overwhelm the system. This leads to connection storms that ultimately exceed Azure's hard max_connections limit of ~6K, causing cascading outages where the database spends more resources managing process overhead than executing queries.
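The retry amplification described above can be approximated with a geometric series. This is a simplified sketch under a stated assumption: every attempt fails independently with the same probability, and clients retry immediately up to a fixed limit. In a real overload the failure rate rises with load, so the true effect is typically worse than this estimate. The function names are illustrative.

```python
def expected_attempts(failure_rate: float, max_retries: int) -> float:
    """Expected attempts per logical request under independent failures.

    With failure probability p and up to r retries, the expected number of
    attempts is 1 + p + p**2 + ... + p**r.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    return sum(failure_rate ** k for k in range(max_retries + 1))


def amplified_rps(base_rps: float, failure_rate: float, max_retries: int) -> float:
    """Request rate the database actually sees once retries are included."""
    return base_rps * expected_attempts(failure_rate, max_retries)


# Half of all attempts timing out, with 3 retries, nearly doubles the load.
print(amplified_rps(1000, 0.5, 3))  # 1875.0
```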

The PGD Solution:

- Integrated Connection Manager: Unlike external sidecars (a separate proxy or pooler such as PgBouncer or HAProxy deployed alongside the database), PGD 6 uses a built-in Connection Manager that runs as a background worker. EDB has deep expertise in PostgreSQL connection management, including long-standing contributions to the PgBouncer ecosystem, and that experience informs PGD’s integrated approach to connection handling under bursty traffic, failovers, and retry storms. Despite our deep investment in PgBouncer, we designed PGD’s native Connection Manager specifically to solve the distributed routing and connection storm (failover/retry spike) problems that external sidecars cannot effectively handle.

- Active-Active Architecture: By distributing write operations geographically to different lead writers (the write-authoritative node for a region/data group), PGD absorbs connection surges across the mesh rather than funneling them to a single primary.

II. Query & Application Behavior

Current Challenge: Inefficient query patterns, such as the complex 12-table ORM joins your team has documented, can easily saturate CPU and cause cluster-wide latency. Today, you are forced to mitigate these "killer queries" and "noisy neighbors" through time-consuming manual SQL reviews, strict workload isolation, and reactive monitoring to prevent queries from bringing down the service.

The PGD Solution:

- Conflict-Free Replicated Data Types (CRDTs): In a single-primary system, high-velocity updates to shared data (like global counters or session states) create 'hot rows,' forcing thousands of concurrent transactions to wait in line for a single database lock. PGD eliminates this severe performance bottleneck using CRDTs. It allows multiple nodes to update the same row simultaneously without global locking, merging the values in the background to ensure zero data loss and uninterrupted write throughput.

- Advanced Workload Isolation (Beyond Physical Replicas): While OpenAI currently offloads reads to PSR replicas, physical replication enforces a rigid, byte-for-byte clone. PGD’s logical Subscriber-Only Nodes transform your database from a rigid read-only mirror into an intelligent workload accelerator. This allows developers to execute local write transactions for complex reporting, meaning you can build custom indexes, materialized views, and temporary tables directly on the replica to optimize ORM-generated 'killer queries' on the fly. Combined with selective replication sets, this fully decouples 'noisy neighbor' workloads, ensuring heavy reporting never risks the stability or performance of your primary transactional engine.

- Tiered Tables for Historical Analytics: For heavy analytical queries that scan massive datasets (e.g., telemetry logs or old session histories), PGD integrates with the EDB Postgres Analytics Accelerator to offer Tiered Tables. This transparently offloads 'cold' historical data to cost-effective object storage in columnar formats. Complex analytical queries are seamlessly routed to a dedicated Vectorized Query Engine that operates up to 30x faster than standard Postgres. This completely isolates 'noisy neighbor' analytical workloads from the transactional engine, eliminating data bloat while allowing the application to query a single, unified table.
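To make the CRDT idea from the list above concrete, here is a minimal grow-only counter (G-Counter), one of the simplest CRDTs. It is a generic textbook illustration, not PGD's implementation or API: the class name and node labels are hypothetical. Each node increments only its own slot, and merging takes the per-node maximum, so replicas converge without any cross-node lock.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class GCounter:
    """Grow-only counter: one slot per node, merged by per-node maximum."""
    counts: Dict[str, int] = field(default_factory=dict)

    def increment(self, node: str, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a grow-only counter cannot be decremented")
        self.counts[node] = self.counts.get(node, 0) + amount

    def merge(self, other: "GCounter") -> "GCounter":
        """Return a new counter holding the larger count for every node."""
        merged = dict(self.counts)
        for node, count in other.counts.items():
            merged[node] = max(merged.get(node, 0), count)
        return GCounter(merged)

    @property
    def value(self) -> int:
        return sum(self.counts.values())


# Two replicas accept writes to the "same row" concurrently.
replica_a, replica_b = GCounter(), GCounter()
replica_a.increment("node_a", 3)
replica_b.increment("node_b", 2)
print(replica_a.merge(replica_b).value)  # 5
```

Because the merge is commutative and idempotent, replicas can exchange state in any order, or more than once, and still agree on the total.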

III. Maintenance Contention and Storage Engine Overhead

Current Challenge: PostgreSQL’s single-writer architecture is prone to maintenance-driven downtime. Operations like VACUUM FULL or REINDEX take exclusive locks that block application queries, while the surge of WAL they generate for replication saturates I/O. This forces your engineering teams into extensive manual workarounds, such as enforcing a strict 5-second timeout on schema locks simply to prevent background maintenance from paralyzing live user traffic.

The PGD Solution:

- Rolling Online Maintenance: PGD leverages its active-active nature to decouple maintenance from application availability. High-impact operations (such as VACUUM FULL, REINDEX, or patching) are executed on a per-node basis, while traffic is dynamically diverted to peer nodes. This transforms blocking maintenance into a routine background task, completely eliminating the need for scheduled downtime windows while protecting your application's strict latency budgets.

- Zero-Impact Background Tasks: Because PGD operates on logical replication rather than physical byte-streaming, maintenance operations like VACUUM FULL or REINDEX can be performed independently, node-by-node. These activities remain strictly local to the specific instance. By dynamically routing application traffic to active peer nodes during these tasks, PGD mechanically eliminates the need for scheduled maintenance windows without locking the global cluster or stalling the write path.
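The node-by-node pattern described in the list above can be sketched as a simple control loop. This is a simulation only: it does not call any PGD or PostgreSQL interface, and `rolling_maintenance`, `maintain`, and `min_serving` are hypothetical names. It shows the invariant that matters, namely that at every step enough peers remain in rotation to serve traffic.

```python
from typing import Callable, List


def rolling_maintenance(nodes: List[str],
                        maintain: Callable[[str], None],
                        min_serving: int = 1) -> List[List[str]]:
    """Maintain one node at a time; return the serving set at each step."""
    history: List[List[str]] = []
    for node in nodes:
        # Traffic is routed to the peers while this node is out of rotation.
        serving = [peer for peer in nodes if peer != node]
        if len(serving) < min_serving:
            raise RuntimeError(f"cannot drain {node}: too few serving peers")
        history.append(serving)
        maintain(node)  # e.g. a local REINDEX, supplied by the caller
        # The node rejoins the rotation before the next one is drained.
    return history


completed: List[str] = []
steps = rolling_maintenance(["node1", "node2", "node3"], completed.append)
print(steps)
```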

IV. Architecture & Replication Scaling Limits

Current Challenge: Managing ~50 global replicas creates immense pressure on the Primary node’s egress bandwidth (WAL shipping). OpenAI currently uses "Cascading Replication" (daisy-chaining) to survive, which introduces replication lag risks and topology fragility if an intermediate node fails.

The PGD Solution:

- Optimized Replication Efficiency: While your current physical replication requires zero decoding, it locks you into a single-primary model. Moving to a distributed architecture often implies logical replication and multi-writer designs, which are frequently criticized for (1) fan-out decoding overhead (decode per subscriber) and (2) operational complexity as the number of nodes grows. PGD eliminates the first penalty by performing logical decoding only once on the source node and sharing the resulting stream across all replicas. The business value is clear: you can scale your read fleet horizontally without linearly increasing CPU and egress bandwidth on your primary node. This allows you to retire fragile cascading replication (daisy-chaining) topologies, reducing infrastructure overhead while safely sustaining massive traffic.

- Accelerated Replication Throughput: Standard PostgreSQL replication applies changes serially (single-threaded), creating a bottleneck where replicas cannot keep pace with a primary node processing high-velocity write traffic. PGD overcomes this by analyzing transaction dependencies and applying non-conflicting changes in parallel across multiple threads. This results in up to 5x faster replication throughput, and it eliminates the replication lag that causes 'stale reads' across your global replica fleet, ensuring your users always interact with real-time, consistent data even during massive application write surges.

- Integrated Consensus & Failover: Azure Flexible Server and standard HA solutions rely on external consensus stores (e.g., etcd, a key-value store) or on monitor-driven coordinators to manage failover. This creates a complex dependency chain where database availability hinges on the speed and accuracy of a separate system. PGD eliminates this "bolted-on" complexity by embedding Raft consensus directly into the database engine. This ensures deterministic leadership elections and automatic traffic rerouting without the latency or "split-brain" risks inherent in external monitoring tools.

- Optimized Read Scaling: To support massive read fleets without saturating the primary's network, PGD utilizes a Hierarchical Mesh topology for Subscriber-Only groups. A designated "Group Leader" receives a single stream of updates from the primary and redistributes them locally to other subscribers. This is architecturally critical: it completely insulates the primary node from CPU and egress-bandwidth saturation typical of massive WAL fan-out. This allows you to scale your read fleet linearly and retire fragile cascading (daisy-chaining) replication topologies.
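The parallel-apply idea from the list above (apply non-conflicting changes concurrently, keep conflicting ones in commit order) can be illustrated with a small scheduler. This is a hedged sketch, not PGD's actual algorithm: it assumes that two transactions conflict only when their sets of written row keys overlap, and the names `Txn` and `schedule_waves` are hypothetical. Transactions placed in the same wave could be applied by separate workers.

```python
from typing import Dict, FrozenSet, Hashable, List, Sequence, Tuple

# (transaction id, set of row keys it writes), listed in commit order.
Txn = Tuple[str, FrozenSet[Hashable]]


def schedule_waves(txns: Sequence[Txn]) -> List[List[str]]:
    """Group transactions into waves that are safe to apply in parallel."""
    last_wave_for_key: Dict[Hashable, int] = {}
    waves: List[List[str]] = []
    for txn_id, keys in txns:
        # Run strictly after every earlier transaction that touched the same keys.
        earlier = [last_wave_for_key[k] for k in keys if k in last_wave_for_key]
        wave = 1 + max(earlier, default=-1)
        if wave == len(waves):
            waves.append([])
        waves[wave].append(txn_id)
        for key in keys:
            last_wave_for_key[key] = wave
    return waves


commit_log: List[Txn] = [
    ("t1", frozenset({"row_a"})),
    ("t2", frozenset({"row_b"})),
    ("t3", frozenset({"row_a", "row_c"})),  # conflicts with t1
    ("t4", frozenset({"row_d"})),
    ("t5", frozenset({"row_c"})),           # conflicts with t3
]
print(schedule_waves(commit_log))  # [['t1', 't2', 't4'], ['t3'], ['t5']]
```

A serial applier would need five steps for this log; the wave schedule needs three, and the gain grows as the share of independent transactions increases.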

V. Operational Change Management

Current Challenge: To prevent global database lockups, OpenAI enforces a 5-second rule that automatically kills any schema migration that exceeds that window. While this protects the primary from long-duration AccessExclusiveLocks, it places a severe constraint on engineering velocity, forcing teams into complex manual workarounds just to execute routine schema updates.
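A guard of this kind is commonly built on PostgreSQL's `lock_timeout` setting, which aborts a statement that waits too long to acquire a lock. The sketch below only assembles the SQL for such a guarded migration. Whether OpenAI implements its 5-second rule this way is an assumption, since the source does not say; the helper name and the example table are hypothetical.

```python
from typing import List


def guarded_migration(ddl: str, lock_timeout_seconds: int = 5) -> List[str]:
    """Wrap one DDL statement in a transaction with a bounded lock wait."""
    if lock_timeout_seconds <= 0:
        raise ValueError("lock_timeout_seconds must be positive")
    statement = ddl.strip().rstrip(";")
    if not statement:
        raise ValueError("ddl must not be empty")
    return [
        "BEGIN;",
        # SET LOCAL limits the setting to this transaction only.
        f"SET LOCAL lock_timeout = '{lock_timeout_seconds}s';",
        f"{statement};",
        "COMMIT;",
    ]


for line in guarded_migration("ALTER TABLE users ADD COLUMN note text"):
    print(line)
```

Note that `lock_timeout` bounds only the time spent waiting for the lock, not the time the statement runs once the lock is held; bounding total execution time would need `statement_timeout` as well.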

The PGD Solution:

- Rolling Schema Updates: Unlike physical replication, which requires identical schemas on all nodes, PGD accommodates temporary schema differences across the cluster. This allows you to perform schema changes and major version upgrades in a rolling fashion, updating one node at a time while the application remains online, and eliminates the "all-or-nothing" fragility of your current 5-second maintenance window.

- Zero-Downtime Major Upgrades: Because PGD utilizes logical replication, it natively supports mixed-version clusters. This allows you to perform major version upgrades (e.g., PG 15 to PG 16) in a rolling fashion, updating one node at a time while the rest of the fleet continues to serve traffic seamlessly. For your team, this completely eliminates extended maintenance windows and the operational burden of rebuilding a 50+ node replica fleet from scratch, allowing you to modernize your database engine without sacrificing global availability.

VI. Portability and Cloud Independence

Current Challenge: Current reliance on Azure Database for PostgreSQL (Flexible Server) and Azure Cosmos DB creates a strategic dependency on a single hyperscaler. While Azure provides robust managed services, this "PaaS Lock-in" tightly couples OpenAI’s data plane to specific Azure networking constructs, storage limits, and regional availability zones.

As OpenAI looks towards ambitious infrastructure frontiers such as the Stargate project with Oracle or expansion into hybrid compute environments, this rigid tether to Azure prevents the data layer from following the compute. To truly scale, your data plane must be as portable as the models themselves, moving beyond a single cloud's regional limits to support a truly multi-cloud, high-performance infrastructure strategy.

The PGD Solution:

- Infrastructure Independence: PGD is purely software-defined. Unlike Azure Flexible Server, PGD runs identically across Azure, AWS, Google Cloud, Oracle Cloud (OCI), on-premises bare metal, and Kubernetes. This architectural decoupling gives OpenAI the absolute flexibility to deploy the database cluster wherever your compute resides, ensuring your data layer remains highly portable and completely free from provider lock-in.

- Seamless Multi-Cloud Operations: PGD natively supports multi-cloud and hybrid architectures. You can establish a single logical cluster that spans Azure (for existing workloads) and Oracle Cloud/Stargate (for new massive compute clusters) and replicates data in real time between them. This capability provides leverage for cost arbitrage between cloud providers and ensures business continuity even in the event of a total provider-level outage.

- Kubernetes-Native Operators: With EDB Postgres for CloudNativePG Cluster (CNPG) and the Global Cluster (PGD Operator), your database infrastructure can be managed declaratively alongside your microservices. This aligns with modern infrastructure-as-code practices, allowing you to treat the database as a portable data service that scales and moves across clusters in lock-step with your compute fleet. With a unified operational model, it eliminates the friction of managing stateful databases differently from stateless applications, significantly accelerating engineering velocity while stripping away the operational overhead of proprietary cloud APIs.

- Data Sovereignty Compliance: As global AI regulation tightens, PGD’s geo-sharding capabilities allow you to keep specific user data (e.g., EU data) pinned to servers within specific borders (geo-fencing) while still maintaining a unified global schema. This granular control over data placement is difficult to achieve with standard PaaS offerings without fracturing the application into isolated regional silos. By using PGD, you can strictly enforce data residency and regulatory compliance at the database layer, sparing your engineering teams the architectural debt of managing fragmented, region-specific deployments.

Conclusion

The Shift: From Heroic Workarounds to Architectural Sovereignty

OpenAI has proven that a monolithic PostgreSQL core can support 800 million users, but only through labor-intensive "heroic" interventions and manual workarounds. Your current architecture is in a transitional state defined by mounting operational debt: a system held together by engineering effort rather than by native design.

By adopting EDB Postgres Distributed (PGD), OpenAI can transition from manual optimization to a native distributed architecture:

- Eliminate Polyglot Fragmentation: Stop the practice of offloading high-velocity writes to isolated NoSQL systems like Azure Cosmos DB. PGD allows you to bring these workloads back into a unified, natively distributed PostgreSQL environment via high-performance multi-master replication.

- Deterministic Resiliency: Replace the guesswork of external, reactive monitoring with the proactive mathematical certainty of embedded Raft consensus. By providing leadership election directly within the database engine, you eliminate the split-brain risks and dependency chains inherent in external coordinators.

- Proven at Tier-1 Scale: EDB’s distributed patterns are not theoretical; they form the backbone for the world’s largest global financial institutions and payment networks, handling extreme throughput with strict "five-9s" availability requirements.

- Total Infrastructure Portability: Break the hyperscaler tether. Whether you are optimizing on Azure today or expanding to Oracle’s Stargate Supercluster tomorrow, EDB PGD runs everywhere, whether on-premises, hybrid, or multi-cloud, ensuring your data layer follows your compute, not your provider.

In summary, by transitioning from heroic manual optimization to native distribution, OpenAI can reclaim its engineering velocity and deploy a truly resilient, self-healing data platform.