PgAdmin Tests New QA Testing Framework

An established and still-growing practice in software development is the use of a testing framework that automatically runs unit tests to determine whether code paths behave as expected under different conditions. Each test case describes a check that must be run against an application to verify that the application behaves as expected.

A well-designed testing framework is:

1. Application independent; and

2. Easy to expand, maintain, and perpetuate.

PgAdmin 4 boasts a very modular and pluggable architecture, so having a pluggable test framework for pgAdmin 4 was a no-brainer. The Quality Assurance Team at EnterpriseDB® (EDB™) developed the test framework for pgAdmin 4, creating a design that allows pgAdmin developers to easily add test cases and run the test suite in a jiffy.

EDB was instrumental in the development of pgAdmin 4. For example, read the blog by Dave Page, who is both EDB Vice President, Chief Architect, Tools and Installers, and Project Lead for pgAdmin. PgAdmin v.1 was released into Beta on Friday, June 10. Find details here.

Test Strategy

By providing a test infrastructure, EDB’s QA team is helping pgAdmin developers easily produce tests that exercise their code and demonstrate its stability on any of the supported platforms. Each developer can extend and enhance the range of available tests on an ongoing basis, whether they have enhanced functionality, improved performance, or fixed a bug.

Using the standard, publicly exposed API, test cases will be clearly defined to verify the functionality of each module. Each module has a different set of APIs, and each API supports different calls:

- GET

- POST

- PUT

- UPDATE

- DELETE

Initially, each module will include test cases covering the GET, POST, DELETE, and UPDATE methods.

Python test scripts built on the unit test framework are defined for each module, with a separate file for each test case. For example, the pgAdmin servers/tests/ directory contains the following files:

- test_server_add.py

- test_server_delete.py

- test_server_get.py

- test_server_update.py
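As a rough illustration of what one of these per-operation test files might contain, here is a minimal sketch. It assumes the "unit test framework" mentioned above is Python's standard unittest module, and it runs against a small in-memory stand-in rather than a live pgAdmin instance. FakeServerApi, its methods, the payload fields, and the status codes are all hypothetical and are not taken from the pgAdmin code.

```python
import unittest


class FakeServerApi:
    """Hypothetical in-memory stand-in for a module's API (not pgAdmin code)."""

    def __init__(self):
        self._servers = {}
        self._next_id = 1

    def post(self, data):
        # Create a new server entry and return its generated id.
        server_id = self._next_id
        self._next_id += 1
        self._servers[server_id] = dict(data)
        return 200, {"id": server_id}

    def get(self, server_id):
        if server_id not in self._servers:
            return 404, {}
        return 200, dict(self._servers[server_id])

    def put(self, server_id, data):
        if server_id not in self._servers:
            return 404, {}
        self._servers[server_id].update(data)
        return 200, {}

    def delete(self, server_id):
        if self._servers.pop(server_id, None) is None:
            return 404, {}
        return 200, {}


class ServerGetTestCase(unittest.TestCase):
    """Roughly what a file such as test_server_get.py might verify."""

    def setUp(self):
        # Each test starts from a fresh API with one registered server.
        self.api = FakeServerApi()
        _, body = self.api.post({"name": "test-server", "port": 5432})
        self.server_id = body["id"]

    def test_get_existing_server(self):
        status, body = self.api.get(self.server_id)
        self.assertEqual(status, 200)
        self.assertEqual(body["name"], "test-server")

    def test_get_missing_server(self):
        status, _ = self.api.get(self.server_id + 1)
        self.assertEqual(status, 404)
```

Saved as a file, a test case like this can be run with python -m unittest. Keeping one operation per file mirrors the file list above and keeps a failure easy to trace to a single API call.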

Tests can easily be executed across various combinations of platform and supported browser, with no additional test setup.

The pgAdmin Testing Framework

The pgAdmin testing framework is an execution environment that runs automated tests for pgAdmin modules and nodes, each of which is a distinct block of code. The testing framework provides:

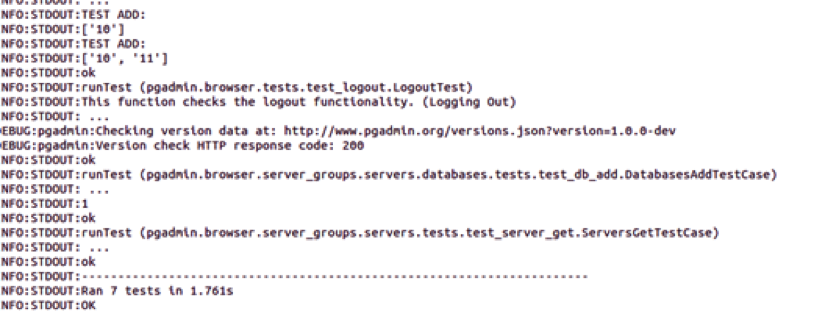

- a centralized and standardized logging facility, resulting in self-explanatory test output;

- a mechanism to drive the application under test (pgAdmin);

- a mechanism that executes each test; and

- a mechanism that reports summary results.

The testing framework is part of the pgAdmin code, so any given checkout is accompanied by a working and up-to-date set of test cases.

The individual treeview nodes and pieces of functionality in pgAdmin (generally known as nodes and modules) are highly modularized. To keep the test framework as modular and pluggable as pgAdmin itself, we dynamically populate the test schedule based on the module or modules the user is testing. To aid with this, each module includes its own tests in a tests/ subdirectory.

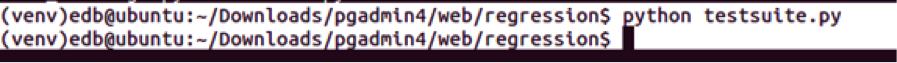

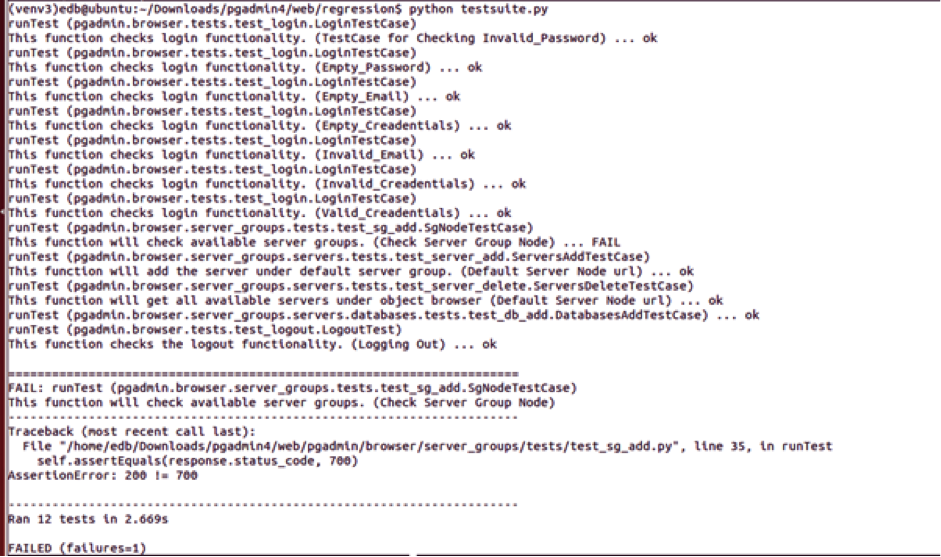

In the regression folder (which can be found in $SRC/pgadmin/web/regression) there is a script called testsuite.py that is responsible for execution of the tests in the schedule.

This script requires the following input:

- test_config.json, which contains the configuration values required for a database server to test against (test_config.json.in can be used as a template). The configuration file includes details such as user, password, database, port, etc.

- The node or module for which to execute tests: a single module, a comma-separated list of modules, or the default (which is to include all modules).

- If required, additional test data or prerequisite files, which the test framework executes before the tests for each module are called.
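The first two inputs could be handled along the lines of the sketch below. This is an illustration only: the flat JSON layout, the REQUIRED_KEYS tuple, and both function names are assumptions made for the example, and the real test_config.json may be structured differently.

```python
import json

# Key names assumed from the details listed above; the real file may differ.
REQUIRED_KEYS = ("user", "password", "database", "port")


def load_test_config(text):
    """Parse the JSON configuration text and check that the expected keys exist."""
    config = json.loads(text)
    missing = [key for key in REQUIRED_KEYS if key not in config]
    if missing:
        raise ValueError("missing configuration values: " + ", ".join(missing))
    return config


def parse_module_selection(argument, all_modules):
    """Turn 'a,b' into ['a', 'b']; no selection means every module."""
    requested = [name.strip() for name in (argument or "").split(",") if name.strip()]
    if not requested:
        # Default behaviour described above: include all modules.
        return list(all_modules)
    unknown = [name for name in requested if name not in all_modules]
    if unknown:
        raise ValueError("unknown modules: " + ", ".join(unknown))
    return requested
```

Validating the configuration and the module names up front means a typo is reported before any test touches the database server.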

How do we populate the test schedule?

pgAdmin dynamically loads modules at startup. We use the same mechanism to populate the test schedule, except that we omit any nodes/modules that do not have a tests/ subdirectory.

For each node/module with a tests/ directory, we add all the test cases we find to the schedule. At present it is not possible to selectively run individual tests in a module, but this capability may be added in the future. For modules/nodes without a tests/ directory, a warning is displayed to prompt the developer to add tests for that module/node.
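The discovery step described above can be sketched as follows. This is a simplified stand-in, not pgAdmin's module loader: it walks a directory instead of reusing the application's loading mechanism, it represents the schedule as a plain list of file paths, and the build_schedule name and the test_*.py naming rule are assumptions made for the example.

```python
import os
import warnings


def build_schedule(modules_root):
    """Collect test files from every module directory that has a tests/ subdirectory."""
    schedule = []
    for name in sorted(os.listdir(modules_root)):
        module_dir = os.path.join(modules_root, name)
        if not os.path.isdir(module_dir):
            continue
        tests_dir = os.path.join(module_dir, "tests")
        if not os.path.isdir(tests_dir):
            # Omit the module, but nudge the developer to add tests for it.
            warnings.warn("no tests/ directory for module '%s'; please add tests" % name)
            continue
        for filename in sorted(os.listdir(tests_dir)):
            if filename.startswith("test_") and filename.endswith(".py"):
                schedule.append(os.path.join(name, "tests", filename))
    return schedule
```

Because the schedule is derived from the directory layout, adding a tests/ subdirectory to a module is enough for its tests to be picked up on the next run.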

The tests in the schedule are executed sequentially. The result output of each test is checked, and the test is marked as PASS or FAIL accordingly. A test summary and statistics are printed at the end of each execution of the suite.

Future Enhancements

In the future, to run the test cases against multiple database versions, we plan to populate each test-schedule file with the names of the test cases that are supported on the server. Fine-grained control of the content of each test schedule is planned for phase 2 of the testing framework implementation.

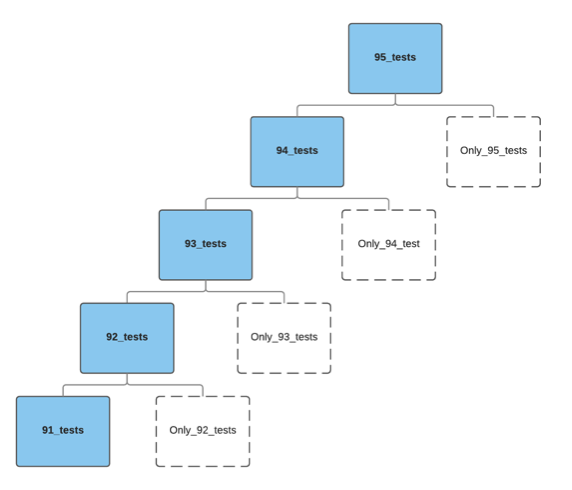

The key will be the logic that dynamically builds test-schedules for one or more database server versions. For example, the suite may contain test-schedules that are named:

- only_92_tests (one or more tests that would only run on 9.2)

- 92_tests (tests that run on 9.2 and above)

- only_93_tests

- 93_tests

- only_94_tests

- 94_tests

- etc.

Within the suite, each test-schedule file lists the series of tests that will execute for that particular database version. Initially, each schedule file will contain a predetermined set of base tests that exercise the basic functionality of that version.
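To make the naming scheme concrete, the sketch below selects the schedules that would apply to a given server version. Since this part of the framework is still planned, everything here is an assumption: the schedules_for function, the (major, minor) tuple, and the rule that the first digit of the name is the major version and the remaining digits the minor version (so "92" means 9.2).

```python
import re

# Matches names such as "only_92_tests" and "92_tests" (assumed naming rule).
_SCHEDULE_NAME = re.compile(r"^(only_)?(\d)(\d+)_tests$")


def schedules_for(server_version, schedule_names):
    """Pick the schedules that apply to a (major, minor) version such as (9, 3)."""
    selected = []
    for name in schedule_names:
        match = _SCHEDULE_NAME.match(name)
        if match is None:
            continue  # not a versioned schedule name
        schedule_version = (int(match.group(2)), int(match.group(3)))
        if match.group(1):
            # only_NN_tests: runs on exactly that version.
            applies = schedule_version == server_version
        else:
            # NN_tests: runs on that version and above.
            applies = schedule_version <= server_version
        if applies:
            selected.append(name)
    return selected
```

With the example names from the list above, a 9.3 server would pick up 92_tests, only_93_tests, and 93_tests, while a 9.2 server would pick up only_92_tests and 92_tests.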

This scheme will require further refinement, because pgAdmin also supports some derivatives of PostgreSQL (for example, the EDB Postgres Platform) that need additional tests that will not execute on PostgreSQL.

Kanchan Mohitey is Senior Manager, Quality at EnterpriseDB.