Postgres Vision 2022 Session Spotlight: Oracle to PostgreSQL Migration Tales from the Field

With exorbitant pricing and restrictive licensing, Oracle databases are becoming less and less popular among businesses invested in innovation and infrastructure modernization. Increasingly, these organizations are seeking out database alternatives that will provide them with the flexibility and control that commercial offerings cannot.

As such, many are turning to Postgres.

However, major database migrations can be a daunting undertaking, especially given the major changes that industries and markets have witnessed in the last few years. This is why EDB is dedicated to providing businesses considering a migration to Postgres the resources and expertise necessary to seamlessly move their mission-critical data and applications and take advantage of a database that truly suits their needs. Latest among these resources is one of the highlights from Postgres Vision 2022, entitled “Oracle to PostgreSQL Migration Tales from the Field.”

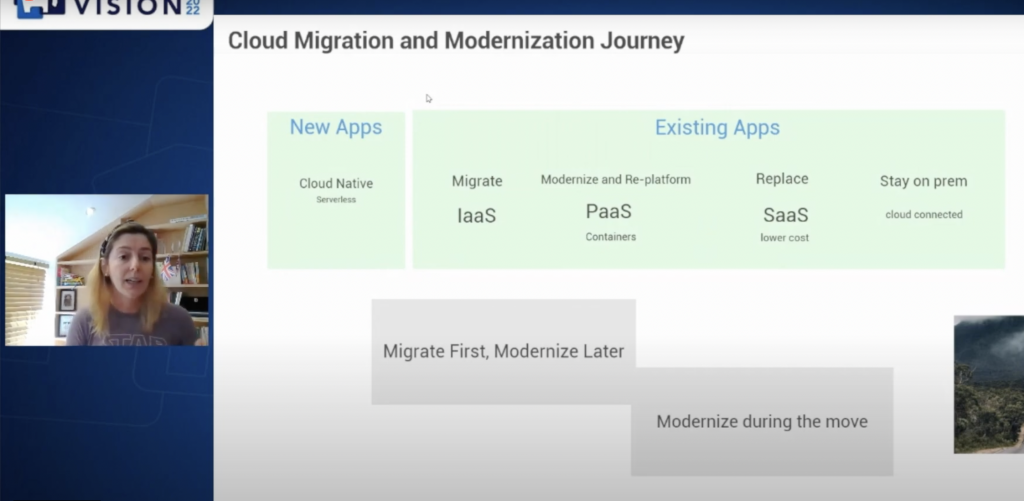

Over the course of this discussion, Paula Berenguel, Principal Engineering Architect at Microsoft, assures us that database migrations to Postgres are not dead, and are still a very big deal. She says there’s a huge number of organizations moving their databases to the cloud, and in her efforts to assist these customers, Berenguel discovered the specific steps that make migration more successful.

When she started working in migrations in 2013, Berenguel says customers were slow to migrate. Then Covid happened, and every customer who was cautious about coming to the cloud because of security concerns, or lack of budget, or timing issues had to move more quickly.

Berenguel says about her job, “We’re trying to help customers desperate to come to the cloud and desperate for every platform so they can offer better products to their own customers. That’s the main reason people migrate… there’s a business reason behind it, and Covid accelerated this.”

Designing your ideal Postgres migration

While there’s no single way of adopting Postgres, Berenguel says there is one step-by-step methodology that helps customers get there a bit quicker than other approaches.

In a heterogeneous migration going from Oracle to Postgres or another database to Postgres, the ideal methodology starts with discovery. Berenguel defines discovery as helping the customer to know what they don’t know yet—whether that’s applications running particular schemas or what certain servers are doing or something else.

Assessment comes next, which involves going deep into the database engine and understanding the size of the database, how many CPUs there are, the RAM that’s required to run an application, the integration points, the amount of tables, functions, packages, PostgreSQL triggers and more. If everything is running inside that database, you’d want to understand how difficult it would be to convert it all to Postgres.

After assessment, Berenguel says the steps are pretty logical. Basically you’d convert the schema, tables, functions, procedures, packages and everything else to its Postgres form. Following that, you’d conduct the actual migration. This involves synchronization of data, then testing and optimizing.

These are the steps Berenguel outlined for a heterogeneous migration:

For a homogeneous migration, when the data goes from Postgres to Postgres due to a version change or some other reason, Berenguel says the steps are similar but simpler.

“If you know all the variables and all the data points in order to get a workload from A to B, for either modernization or a migration, you’re going to be successful,” Berenguel says.

Lean on migration tools

As for tools to help with Oracle to Postgres migrations, ora2pg is the one Berenguel’s team uses the most because it’s open source, it’s freeware, it has decent documentation and it’s very advanced.

Berenguel says PG_dump and PG restore can be used on the homogeneous side to move data from Postgres to Postgres, though a Change Data Capture (CDC) tool might be needed for streaming the data from a to b.

She also mentioned that it’s critical to consider the tools and the platform you’re using, and to understand their limitations. For example, before migrating a 100 terabyte Postgres server to another vendor, you’ll want to be sure the new platform can accommodate 100 terabytes. ”There’s a huge combination of tools, conversion and target platforms that need to be known in order to make your project successful,” she emphasized.

Key takeaways

Berenguel learned the following lessons when doing migrations:

• Draw an architecture diagram with target state and migration architecture: Drawing an architecture diagram can make your project more successful. It doesn’t have to be a complicated diagram, but it should show the target state and what the migration architecture will be. An architecture diagram can be critical for disaster recovery, high availability and security.

• Undertake a detailed migration study: When you do a migration study, make sure to ask as many questions as possible. Among other things, you’ll want to find out if data is compressed so it doesn’t expand during migration and surpass the storage limits of the new platform. You’ll also want to know which Postgres version is running so you can perform a pre-upgrade if needed.

• Become familiar with migration technology and techniques: You’ll want to understand parallelism, CDC, partitioning, database mapping and more. It doesn’t hurt to run a quick profiling on the source data so you know if your data mapping is correct.

• Don’t over or underestimate tools; keep reading the docs: The right tools can streamline complex migration tasks. In one of Berenguel’s jobs, it was taking 42 hours to migrate one table of 500 GB to Postgres. She reached out to her team for help and a team member introduced her to a configuration tool that accelerated the migration of images. Migration time was cut from 42 hours to 7 hours—a massive improvement. That’s why Berenguel believes you should never underestimate what tools can do. In addition, she emphasizes to keep reading the documentation to stay on top of changes.

• Rely on Postgres community extensions: Berenguel mentioned one important extension called orafce, which allows you to not have to convert as much. BigAnimal from EDB is another resource which has built-in native Oracle functions already compiled into its engine, which can make life very simple for those migrating to BigAnimal.

• Be flexible: Berenguel stressed the importance of being flexible, and how there’s always something new to learn.

Berenguel left us with the thought that digital transformation is shifting from cloud first to cloud everywhere. With 96% of enterprise data still stored on-premises according to Gartner, Berenguel believes there will be jobs in migrations for the next 20 years. Especially for those who “know their stuff.”