Postgres Monitoring, Enterprise Style: Ensure Your Database is Healthy and Fit for Any Challenge

You don't know what you can't see

When you speak with a senior professional in the art of database management, the phrase you will hear most often is "Boring is good." In essence, this means that predictability is key to ensuring continuity.

From what we see in the market, and as you may have read in various publications from EDB and other database fanatics, extreme high availability for database platforms is something of a holy grail. As businesses continue to broaden their "opening hours," and need their applications to support them, database downtime is no longer acceptable.

This puts enormous pressure on the operation of databases such as Postgres. And then the age-old dogma kicks in: you do not know what you cannot see. To safeguard Postgres availability, you need a solid understanding of what is happening in and around your precious data processing platform. If you do not have that knowledge, how can you anticipate and predict risks to availability? I compare this to flying a fighter jet low through the mountains in dense fog. I doubt you would feel very comfortable doing that, so why should you be comfortable doing it with your database?

Domain specific monitoring

There is a multitude of monitoring solutions out there, and most of them have their own area of focus.

Small

Some are small and agile, aimed at projects that need a lot of flexibility. They offer many different extensions and can query each and every resource in your organization that has any ability to answer. Traditionally this ranges from servers and network devices to printers and workstations. Besides that, these tools might be able to extract information from doors, alarm systems, fire alarms, and what have you. This is extremely useful when you have some more exotic things you want to keep an eye on.

Big

Others are extremely comprehensive and elaborate, especially the larger Enterprise Monitoring solutions that are designed and built to function in large enterprise environments. These solutions allow for tailoring, authorization, escalation, integration, and the like, simply because the teams that work with them are large and diverse.

PEM

And there you have it. Should your Postgres installation fit in the first category or in the second? The bottom line is that it can be both. If we then think back to our first paragraph, what would we be missing, and what would we have to start building and collating to get the full picture?

Introducing Postgres Enterprise Manager (PEM) might then be just the right thing to do, as PEM incorporates years of experience in operating Postgres. Because of this domain-specific knowledge of Postgres, as I refer to it, most aspects that play a role in securing Postgres are covered in PEM out of the box. And in the spirit of "you don't know what you can't see," PEM also lets you create your own specific queries, or probes as they are called, so that you gain a full understanding of the health and wellbeing of your specific Postgres implementation.
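To make the idea of a custom probe a little more tangible, here is a minimal sketch in Python of the kind of query a probe might run and how its result could be checked against alert thresholds. It is not PEM's API: the names `PROBE_SQL`, `Thresholds`, `sessions_in_state`, and `classify`, as well as the threshold values, are hypothetical and only illustrate the principle. The one real Postgres object used is the standard `pg_stat_activity` statistics view.

```python
from dataclasses import dataclass

# Example SQL a custom probe might run (an assumption, not a PEM default):
# count client sessions per state using the standard pg_stat_activity view.
PROBE_SQL = """
SELECT state, count(*) AS sessions
FROM pg_stat_activity
WHERE backend_type = 'client backend'
GROUP BY state
"""


@dataclass(frozen=True)
class Thresholds:
    """Hypothetical alert thresholds for a single metric."""
    low: int
    medium: int
    high: int


def sessions_in_state(rows, state):
    """Return the session count for one state from (state, sessions) rows."""
    return sum(count for row_state, count in rows if row_state == state)


def classify(value, thresholds):
    """Map a metric value to an alert level, checking the highest level first."""
    if value >= thresholds.high:
        return "high"
    if value >= thresholds.medium:
        return "medium"
    if value >= thresholds.low:
        return "low"
    return "ok"
```

In practice the rows would come from running `PROBE_SQL` against your database; the sketch keeps that part out so that the threshold logic can be read and tested on its own.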

In that sense, PEM is "small and agile," giving you the flexibility to focus fully on Postgres. Additionally, PEM can be integrated as a seamless part of any large Enterprise Monitoring framework. With integrations ranging from SNMP traps to webhooks, and with full authentication and authorization through standard Role Based Access Control (RBAC), the domain-specific knowledge of Postgres becomes part of that all-encompassing system.
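As an illustration of the webhook side of such an integration, the following sketch shows a tiny receiver built with Python's standard library. It assumes the alert arrives as a JSON object in an HTTP POST; the field names `alert_name`, `severity`, and `server` are hypothetical and would need to be matched to the payload your monitoring tool actually sends.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def summarize_alert(body):
    """Turn a JSON alert payload into one log line.

    The field names are assumptions for illustration only.
    """
    payload = json.loads(body)
    name = payload.get("alert_name", "unknown alert")
    severity = payload.get("severity", "unknown")
    server = payload.get("server", "unknown server")
    return f"[{severity}] {name} on {server}"


class AlertHandler(BaseHTTPRequestHandler):
    """Accept POSTed alerts and print a one-line summary."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = self.rfile.read(length)
        try:
            line = summarize_alert(body)
        except (ValueError, AttributeError):
            # Not valid JSON, or valid JSON that is not an object.
            self.send_response(400)
            self.end_headers()
            return
        print(line)
        self.send_response(204)  # accepted, nothing to return
        self.end_headers()


def serve(port=8080):
    """Listen on localhost only; a real deployment would add TLS and auth."""
    HTTPServer(("127.0.0.1", port), AlertHandler).serve_forever()
```

Calling `serve()` starts the listener. From there, an enterprise monitoring system would typically forward the summary into its own escalation flow rather than print it.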

Administration on the next level

Monitoring is one side of the story; administration is the other. Administration not only helps you implement preventive measures against disruption, it also covers the so-called "day two operations" perspective.

These tasks range from creating users (or roles, as they are called in Postgres) to storage management, and from managing the partitioning of specific tables in your database to initiating a role switch between your primary and your standby Postgres database. These might be operations that you perform only sporadically and that you do not necessarily have scripted in your automation framework.

Even though in the past I have argued that graphical interfaces might not necessarily be a one-size-fits-all solution, there is a distinct time and place where a tool like PEM addresses a crystal clear need. As the working area of Postgres broadens, in some cases Postgres Enterprise Manager might just become the fiery sword that helps the DBA cut through the intricacies of ensuring that the applications stay available and performant, no matter what happens.

Postgres Enterprise Manager continues to evolve

The development of Postgres continues at a relentless pace. To keep up with all the new things that become available, EDB continues to focus on expanding Postgres Enterprise Manager to help fulfill the promises described above. On September 12th of this year, EDB released Postgres Enterprise Manager version 8.2 as part of this continued commitment.

The following features are new in PEM 8.2:

- Two-factor authentication

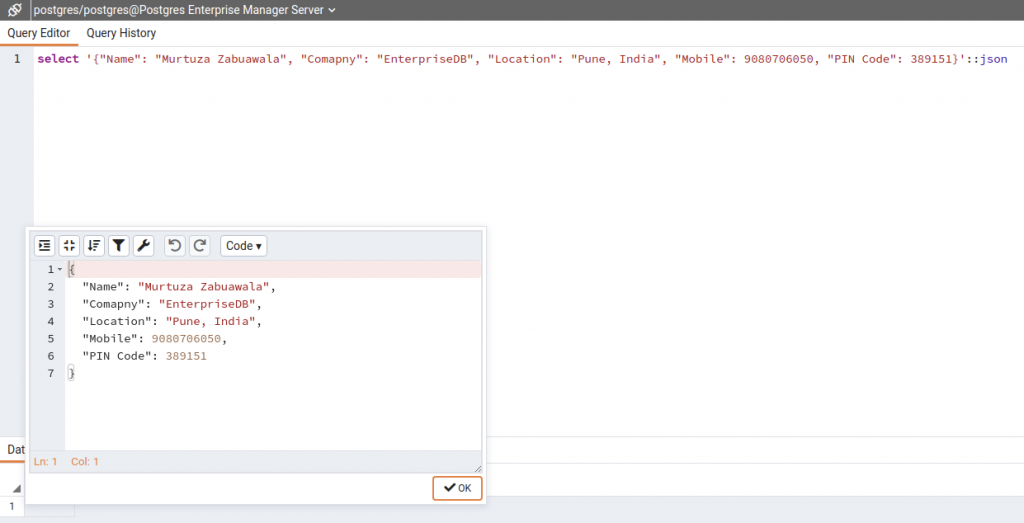

- Formatted JSON editor

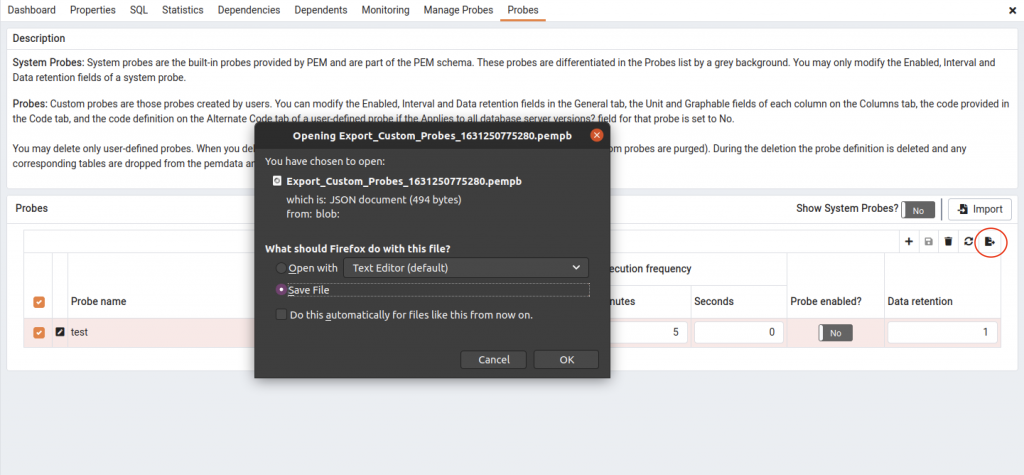

- Import / export of custom probes and alert templates

- PEM Server HA

Conclusion

Monitoring and alerting are as old as IT itself. Knowing what is happening in our environments is critical to ensuring people can continue to benefit from the services they provide.

With the breadth of today's solutions, paired with the in-depth knowledge required for certain areas such as databases, the challenge is to come up with a good solution.

Enterprise monitoring and alerting systems fill an overarching requirement, while domain-specific, specialized tools like Postgres Enterprise Manager can dive deep into the matter and exchange information with these enterprise monitoring solutions. With this, you can build a comprehensive solution to ensure you're always in control!

Want to see more? Check out EDB's PEM.